Mingled dates still pervasive: the forgotten legacy of Y2K

There is no better time than now, twenty years later almost to the day, to reminisce about Y2K.

Most young IT professionals have no recollection of this IT epic. IT veterans won’t mention it on their resumes – it dates them. The Y2K global story (also known as the Millennium Bug) and the Canadian stories of its champions and heroes have long left the front lines of news and corporate agendas, and are now well buried in the depths of Google’s belly.

The story is this: once the default century “19” (in use since the beginning of… IT times) rolled over to “20”, the pervasive use of dates without a century was expected to derail every calculation based on dates. Miscalculated dates would expose systems to all sorts of erroneous or negative date values, division by zero and other calamities that were expected to hit almost all IT applications, wreaking havoc on everything from banking and finance to manufacturing, transportation, power lines and embedded devices. The sky was to fall!
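To make the failure concrete, here is a minimal sketch of the kind of arithmetic that was at risk. The function names and the account example are hypothetical, invented for illustration; real systems stored and computed dates in many different ways.

```python
def years_between(start_yy: int, end_yy: int) -> int:
    """Elapsed years computed on two-digit years, century dropped."""
    return end_yy - start_yy


def years_between_full(start_yyyy: int, end_yyyy: int) -> int:
    """The same calculation on century-explicit years."""
    return end_yyyy - start_yyyy


# A hypothetical account opened in 1965, evaluated in 1999 and then in 2000.
print(years_between(65, 99))           # 34
print(years_between(65, 0))            # -65
print(years_between_full(1965, 2000))  # 35
```

The negative result is the sort of value that could then flow into interest calculations, age checks or expiry logic.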

But a huge IT army, BAs and PMs at the forefront, was quickly assembled and mobilized on the Y2K front to battle the problem. A Y2K industry sprang up, and deficient dates were detected and converted to a century-explicit format by smart tools and industrious programmers working overtime. Millions upon millions of lines of code were repaired in sophisticated specialized centers, and thousands upon thousands of software applications were tested. Hundreds of millions of dollars were spent. And the Y2K army was victorious – to the point that the whole Y2K issue was later labeled a mere “scare” and deemed a scheme to drum up lucrative business for the IT industry!

The Millennium Bug is not to be blamed on programmers’ oversight; at one time, memory space was at a premium, so dropping the century was a smart idea. And no one thought that the code they were writing in the ’60s, ’70s, ’80s or even later would still be running in the next millennium!

Today, in the continuous rush to invent and implement new methodologies and technologies, no one seems inclined to reflect on and learn from the IT lessons of the past. No wonder old problems are resurfacing with deplorable frequency and irritating (or worse!) results – think ambiguous dates on medicine packaging or canned food.

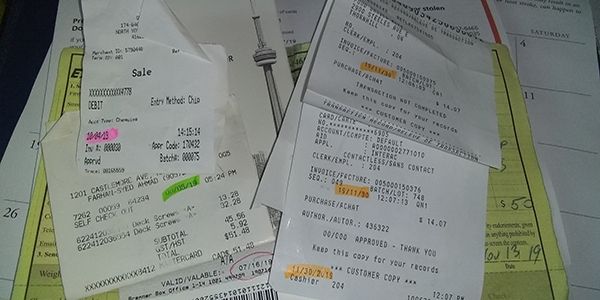

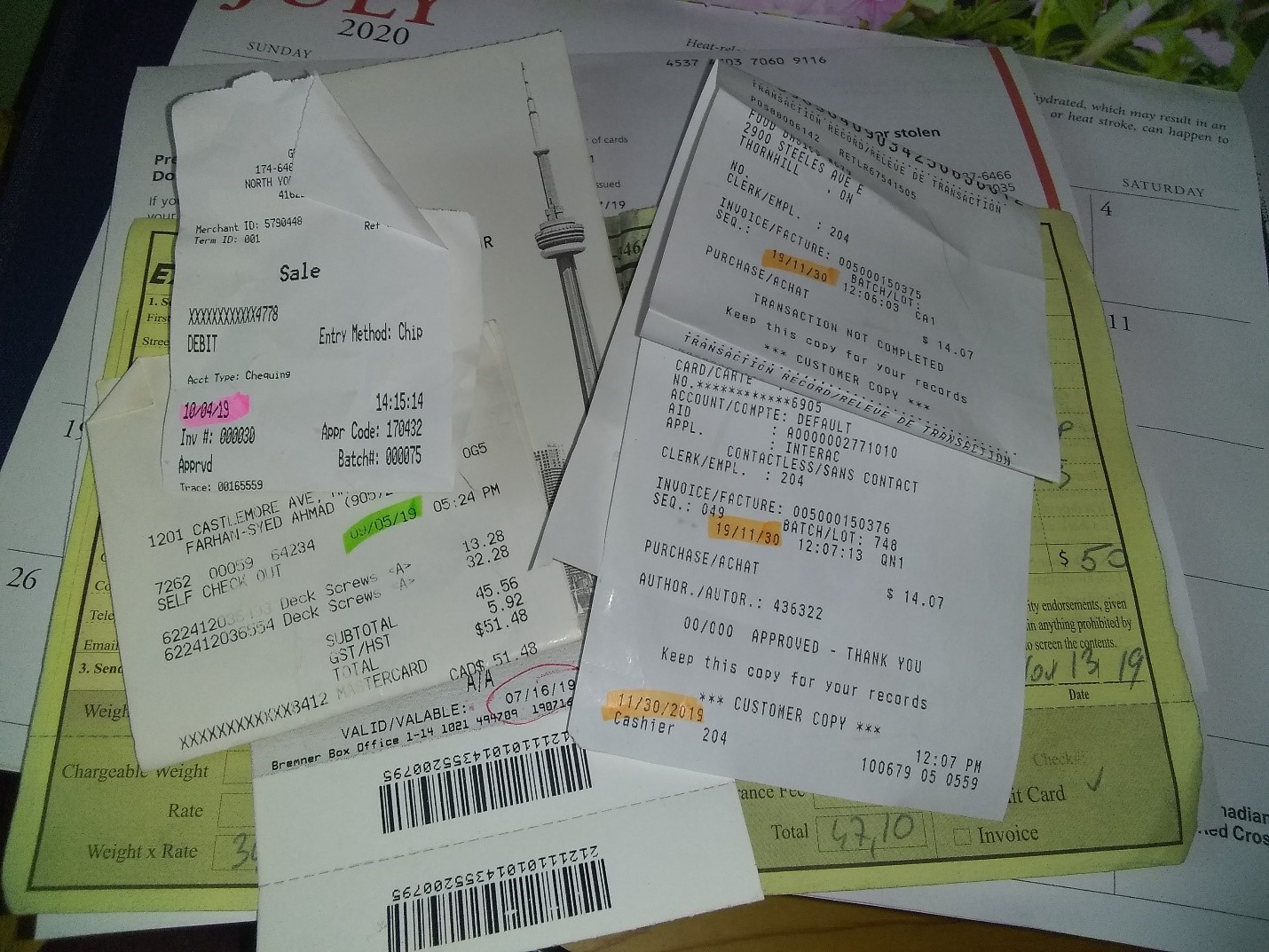

Without attempting a short history of date formatting, it can be said that since we stepped into the current millennium, dates have become potentially confusing. While something like “03/02/96” can have only two possible meanings, a date such as “12/11/09” has more than two valid interpretations. Context is necessary to establish the intended date, and if that context is missing, misinterpretations are likely. It’s 2019, and I am staring at an assortment of dates I cannot easily confirm: did I purchase this item on May 15 of last year, or was it November 18 of 2015? Or does this invoice date back to 2011? This is a relatively minor nuisance. But one of the biggest banks currently requests that a date be filled in as “mm/dd/yyyy” on one of its forms and as “dd/mm/yyyy” on a form from another department, which signals that the date formatting issue is alive and well, and likely entrenched much deeper than in customer-facing artefacts.
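The counting can be checked with a short sketch. It tries every possible ordering of year, month and day and keeps the ones that form a real calendar date. The `interpretations` helper and its `century` parameter are hypothetical names chosen for this illustration, and the century itself is an assumption the caller has to supply, which is precisely the missing context.

```python
from datetime import date
from itertools import permutations


def interpretations(text: str, century: int = 2000) -> list[date]:
    """Return every valid calendar date a three-part, two-digit date could mean.

    All six field orders are tried, including ones rarely used in practice.
    """
    parts = [int(p) for p in text.split("/")]
    found = set()
    for order in permutations(("year", "month", "day")):
        fields = dict(zip(order, parts))
        try:
            found.add(date(century + fields["year"], fields["month"], fields["day"]))
        except ValueError:
            # Not a real date in this ordering (e.g. month 96), so skip it.
            pass
    return sorted(found)


print(len(interpretations("03/02/96", century=1900)))  # 2
print(len(interpretations("12/11/09")))                # 6
```

In the first case 96 can only be a year, which leaves just the month/day swap. In the second, every field could play every role.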

The convention “mm/dd/yy” is specific to North America, but its consistent usage is not a given even on the continent; for example, dates are sometimes formatted as “yy/mm/dd” (or rather “yyyy/mm/dd”, to ensure cross-millennia portability) because that makes sorting easier. Other jurisdictions have different (better!) formats. The ISO 8601 standard for date formatting was published back in 1988, yet despite the fact that we now live in a globalized world, a single standard for date formatting is still not a reality in practice.
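The sorting advantage is easy to demonstrate. In the sketch below, the sample dates are made up for illustration, and the output uses the ISO 8601 form with hyphens (“yyyy-mm-dd”) rather than slashes; the ordering argument is the same either way.

```python
from datetime import datetime

# Hypothetical sample dates written as mm/dd/yyyy.
dates_mdy = ["12/11/2009", "01/05/2019", "03/02/1996"]

# Sorting the raw text orders by month first, not chronologically.
print(sorted(dates_mdy))
# ['01/05/2019', '03/02/1996', '12/11/2009']

# Convert to ISO 8601 text: year first, then month, then day.
dates_iso = [datetime.strptime(d, "%m/%d/%Y").date().isoformat() for d in dates_mdy]

# Now a plain text sort is also a chronological sort.
print(sorted(dates_iso))
# ['1996-03-02', '2009-12-11', '2019-01-05']
```

With the most significant field first and fixed-width, zero-padded parts, no date parsing is needed at sort time.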

This is as astonishing as it is irritating, because twenty years ago the whole IT world struggled hard with the problem of century-less dates. Yet a single standard for formatting dates failed to emerge and take hold, even after this epic effort that was supposed to serve as a lesson. Today it is as if Y2K never happened: century-less dates are frequent, and everyone seems to accept whatever dates are spat out on screens, documents or merchandise packaging.

Why is the basic requirement of a universally intelligible date lost? Why is the Y2K story lost? Was all that effort in vain? Troubling questions! If as IT and BA professionals we don’t learn from our own experiences, similar mistakes will be made. Is the cause to be found in short institutional memory and a lack of concern (and funds) for preserving it? Is it the pressure to deliver in the short term for short-lived applications? Is there a belief that AI and other technological advances make such a mundane requirement irrelevant? Is it a deficiency in how IT staff is trained? Is the BA profession itself guilty of not harvesting and consuming its own history?

The “good” news is that once we pass the year 2031, the problem will ease considerably by itself (32, 33, etc. can no longer pass for a month or day of month), and we’ll be back to the “date care-free” days of the nineteen nineties and before. However, this relief will only last so long, as we’ll be hit again seven thousand nine hundred and eighty years from now, when the world will hopefully step into the year 10,000 (time flies…). Will the old assumption that no present system could still be running serve us well?