Waterfall vs. Agile: A Relative Comparison

The merits of Agile versus Waterfall are well documented. However, it is useful to understand the relative differences between the paradigms and how they impact Business Analysis – particularly if you work in an environment where both approaches are used.

This article attempts to provide a visual, relative comparison between:

- A traditional Waterfall method that moves through defined phases that include Requirements, Design, Implement and Verify, with a defined gateway denoting the point at which the method moves from one phase to the next.

- An Agile method that aligns with the 12 principles of the Agile Manifesto.

The approaches are compared across three areas that matter to many Business Analysts:

- The relative effort involved in specifying and managing requirements.

- The relative risk posed by ill-defined requirements.

- Time to realize benefits.

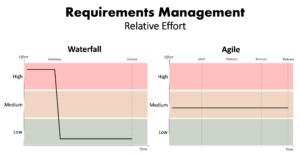

Requirements Management

The timeline for discovering, specifying, and managing requirements differs greatly between the two approaches. A traditional Waterfall approach includes the requirements phase early in the initiative where the focus is on requirements specification and management activities. At the end of this phase, the ability to change requirements is limited. Therefore, most of the effort to elicit and manage requirements happens during this early phase.

By comparison, requirements elicitation and management activities for an Agile initiative are more evenly distributed over the life of the initiative as requirements are constantly reviewed, updated, and prioritized.

The relative requirement management effort over time for each approach is shown in Figure 1.

Figure 1 – Waterfall vs. Agile: Relative requirements management effort over time

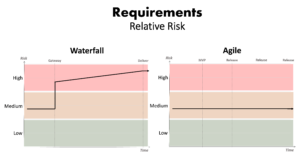

Relative Risk

Missing, incorrect, and/or otherwise ill-defined requirements put the delivery of fit-for-purpose products at risk. However, the relative risk associated with ill-defined requirements is quite different when comparing Waterfall and Agile approaches.

The risk posed by ill-defined requirements for a traditional Waterfall approach is lower during the requirements phase of the initiative as this is the time when requirements can be added and changed without impacting other areas. After this phase, the risk posed by ill-defined requirements dramatically increases and continues to increase for the duration of the initiative. By comparison, the risk posed by ill-defined requirements to Agile approaches is largely constant throughout the initiative. Figure 2 shows the relative risk of both approaches side-by-side.

Figure 2 – Waterfall vs. Agile: Relative risk posed by ill-defined requirements over time


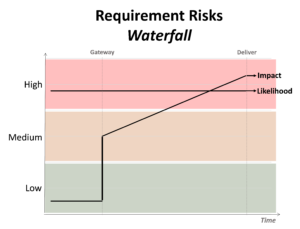

However, it is worth analyzing the relative risk by its constituent components – the likelihood requirements will be ill-defined and the impact of having ill-defined requirements.

For a traditional Waterfall approach, most of the effort to elicit and document requirements happens at the beginning of an initiative, with limited mechanisms in place to revise or revisit requirements in later stages – regardless of what new information may come to light. This makes ill-defined requirements comparatively more likely (i.e., a higher likelihood). The likelihood of having ill-defined requirements is fairly consistent throughout the initiative as it is the result of a constraint imposed by the methodology.


In contrast, the impact of ill-defined requirements is low for Waterfall approaches during the initial requirements phase of the project as this is when there are mechanisms in place to actively review and change requirements. After this point, the impact of ill-defined requirements can increase quite dramatically (particularly for initiatives that involve the procurement of resources and/or base products as per the requirements as part of the next phase) and continues to increase throughout the life of the initiative. This is because the cost of changing products increases as the initiative progresses through the design, implement and verify phases. This is demonstrated in Figure 3.

Figure 3 – Waterfall: Likelihood and impact of ill-defined requirements over time

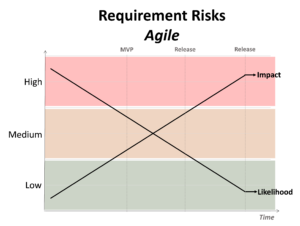

In comparison, Agile methods include mechanisms to incorporate new information into requirements throughout the life of the initiative, meaning the likelihood of ill-defined requirements decreases as the initiative progresses. The impact of ill-defined requirements, on the other hand, increases over the life of the initiative as products are incrementally released. This is shown in Figure 4.

Figure 4 – Agile: Likelihood and impact of ill-defined requirements over time


By the end, the impact of ill-defined requirements on comparable initiatives is relatively similar for both Waterfall and Agile methods – it is the likelihood that contributes to the overall difference in relative risk.
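The decomposition above can be sketched numerically by treating relative risk as likelihood multiplied by impact, which is a common convention rather than something the figures state explicitly. In this minimal sketch every score is an assumed, illustrative value on a 0 to 1 scale, chosen only to mirror the shapes described in the text, and the function and variable names are hypothetical.

```python
# Illustrative sketch: every number is an assumed relative score (0 to 1) picked
# to mirror the curve shapes described in the text; none come from real data.

def relative_risk(likelihood, impact):
    """Combine per-period likelihood and impact into a relative risk score."""
    return [round(l * i, 3) for l, i in zip(likelihood, impact)]

# Five periods; for Waterfall, the first period is the requirements phase.
waterfall_likelihood = [0.8, 0.8, 0.8, 0.8, 0.8]   # roughly constant
waterfall_impact = [0.1, 0.3, 0.5, 0.6, 0.7]       # low at first, then rising

agile_likelihood = [0.8, 0.6, 0.5, 0.4, 0.35]      # falls as requirements are revisited
agile_impact = [0.3, 0.4, 0.5, 0.6, 0.7]           # rises with each incremental release

waterfall_risk = relative_risk(waterfall_likelihood, waterfall_impact)
agile_risk = relative_risk(agile_likelihood, agile_impact)

for period, (w, a) in enumerate(zip(waterfall_risk, agile_risk), start=1):
    print(f"Period {period}: Waterfall risk={w}, Agile risk={a}")
```

With these assumed scores, both approaches finish with the same impact, yet the Waterfall risk keeps climbing while the Agile risk stays roughly flat, because the falling likelihood offsets the rising impact.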

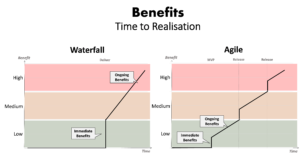

Benefits Realization

Another key point of difference between Waterfall and Agile methods is when benefits are realized. For Waterfall initiatives, benefits generally cannot be realized until the core products are fully delivered, so there is limited opportunity for early benefits realization. By comparison, Agile methods offer opportunities to realize early benefits with the incremental delivery of products, as demonstrated in Figure 5.

Figure 5 – Waterfall vs. Agile: Relative time to the realization of benefits
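The difference in timing can be illustrated with a small cumulative calculation. This is a rough sketch under stated assumptions: the five periods, the total of 100 benefit units, and the even split across Agile increments are all hypothetical values, and the helper name is invented for illustration.

```python
from itertools import accumulate

# Assumed, illustrative values: five periods and 100 arbitrary benefit units.
PERIODS = 5
TOTAL_BENEFIT = 100

# Benefit newly made available by what is delivered in each period.
waterfall_delivered = [0] * (PERIODS - 1) + [TOTAL_BENEFIT]  # everything at the end
agile_delivered = [TOTAL_BENEFIT // PERIODS] * PERIODS       # equal increments

waterfall_cumulative = list(accumulate(waterfall_delivered))
agile_cumulative = list(accumulate(agile_delivered))

def first_benefit_period(cumulative):
    """Return the 1-based period in which any benefit first becomes available."""
    for period, value in enumerate(cumulative, start=1):
        if value > 0:
            return period
    return None

print("Waterfall:", waterfall_cumulative, "first benefit in period",
      first_benefit_period(waterfall_cumulative))
print("Agile:", agile_cumulative, "first benefit in period",
      first_benefit_period(agile_cumulative))
```

Both series reach the same total under these assumptions; what differs is how early the first benefit becomes available.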


Why does it matter?

So why is it useful to understand the relative differences between Waterfall and Agile approaches? There are a few ways increased understanding of the relative differences between methodologies can help, including:

- Resource Planning – helping you to plan and allocate resources where they are needed most based on the approach being used.

- Communication – helping you to describe the relative merits and risks of an approach to stakeholders.

- Arguing your case – providing talking points to help you argue for an alternative approach.

- Assessing alternatives – providing a basis for assessing alternative approaches and for tailoring methodologies.

Conclusion

This article has provided a relative comparison between Waterfall and Agile across three areas. In comparing Waterfall and Agile paradigms in relative terms this article does not seek to promote one over the other – both have their place. In addition, this analysis has not accounted for all the available variations, flavours, and mashups of approaches. However, understanding the relative differences between the basic paradigms can assist when preparing and planning to work with a specific methodology – particularly in environments where analysts may be expected to work with multiple different approaches.

Resources:

- Manifesto for Agile Software Development, agilemanifesto.org (Accessed July 2021)

- Asuni, Oluwakorede, Design Thinking in a Waterfall World, batimes.com/articles/design-thinking-in-a-waterfall-world/, June 2020 (Accessed July 2021)