Exploring Quality Attribute Requirements

In an ideal universe, every product would exhibit the maximum value for all quality attributes. The system would always be available, would never fail, would instantly supply results that are always correct, would block all unauthorized accesses, and would never confuse a user. In reality, trade-offs and conflicts between certain attributes make it impossible to simultaneously optimize all of them. You have to determine which attributes are most important to your project’s success and state specific objectives for them so designers can make appropriate choices. This article describes an approach for identifying and specifying the most important quality attributes for your project, adapted from Jim Brosseau’s method (“Software Quality Attributes: Following All the Steps,” 2010, www.clarrus.com/resources/articles/software-quality-attributes).

Step 1: Start with a broad taxonomy

Begin with a rich set of quality attributes to consider, such as those listed in Table 1. This broad starting point reduces the likelihood of overlooking an important quality dimension.

Table 1. Some external and internal software quality attributes

| External Quality | Brief Description |
|---|---|
| Availability | The extent to which the system’s services are available when and where they are needed |
| Installability | How easy it is to correctly install, uninstall, and reinstall the application |
| Integrity | The extent to which the system protects against data inaccuracy and loss |
| Interoperability | How easily the system can interconnect and exchange data with other systems or components |
| Performance | How quickly and predictably the system responds to user inputs or other events |
| Reliability | How long the system runs before experiencing a failure |
| Robustness | How well the system responds to unexpected operating conditions |
| Safety | How well the system protects against injury or damage |
| Security | How well the system protects against unauthorized access to the application and its data |
| Usability | How easy it is for people to learn, remember, and use the system |

| Internal Quality | Brief Description |
|---|---|
| Efficiency | How efficiently the system uses computer resources |
| Modifiability | How easy it is to maintain, change, enhance, and restructure the system |
| Portability | How easily the system can be made to work in other operating environments |
| Reusability | To what extent components can be used in other systems |
| Scalability | How easily the system can grow to handle more users, transactions, servers, or other extensions |
| Verifiability | How readily developers and testers can confirm that the software was implemented correctly |

Step 2: Reduce the list

Engage a cross-section of stakeholders to assess which of the attributes are likely to be important to the product. For instance, an airport check-in kiosk for travelers must emphasize usability (because most users will encounter it infrequently) and security (because it has to handle payments). Attributes that don’t have much impact on your project’s success need not be considered further.

Step 3: Prioritize the attributes

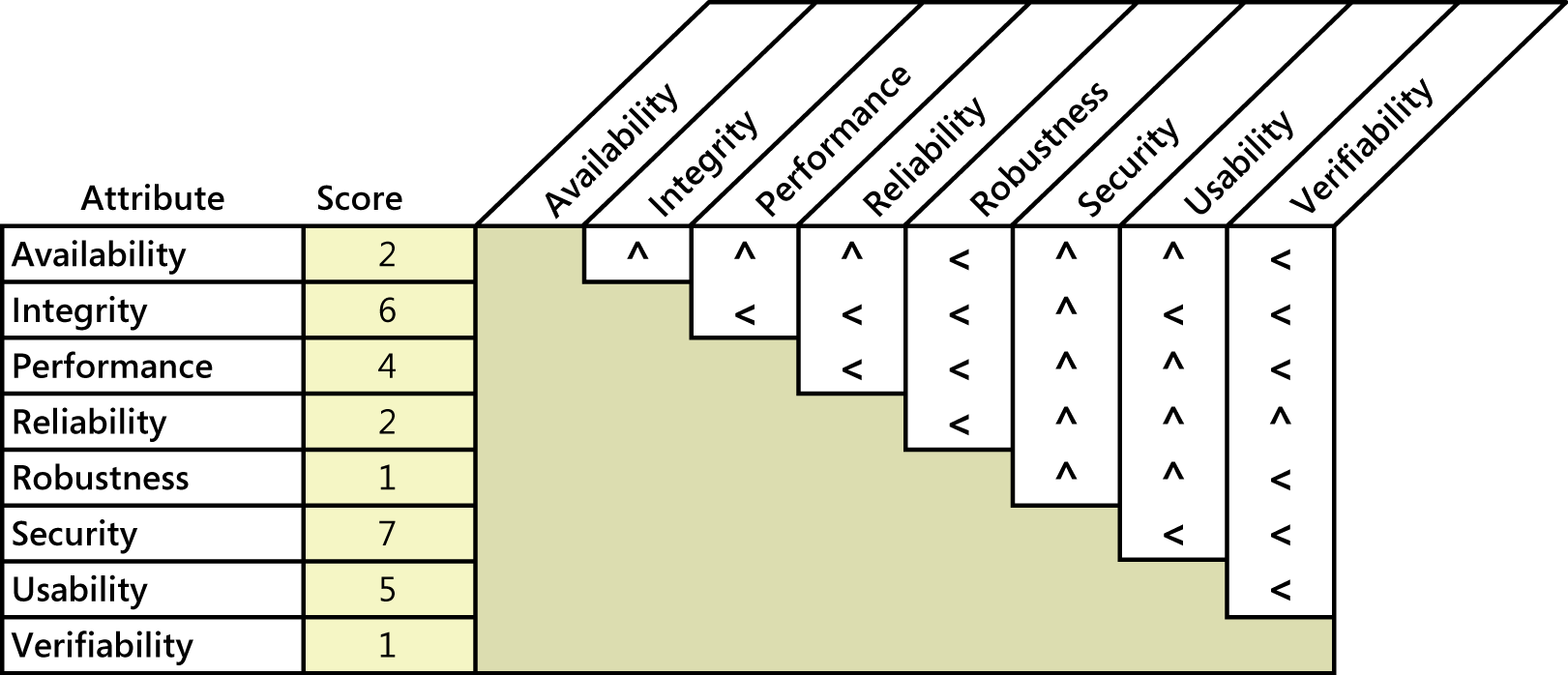

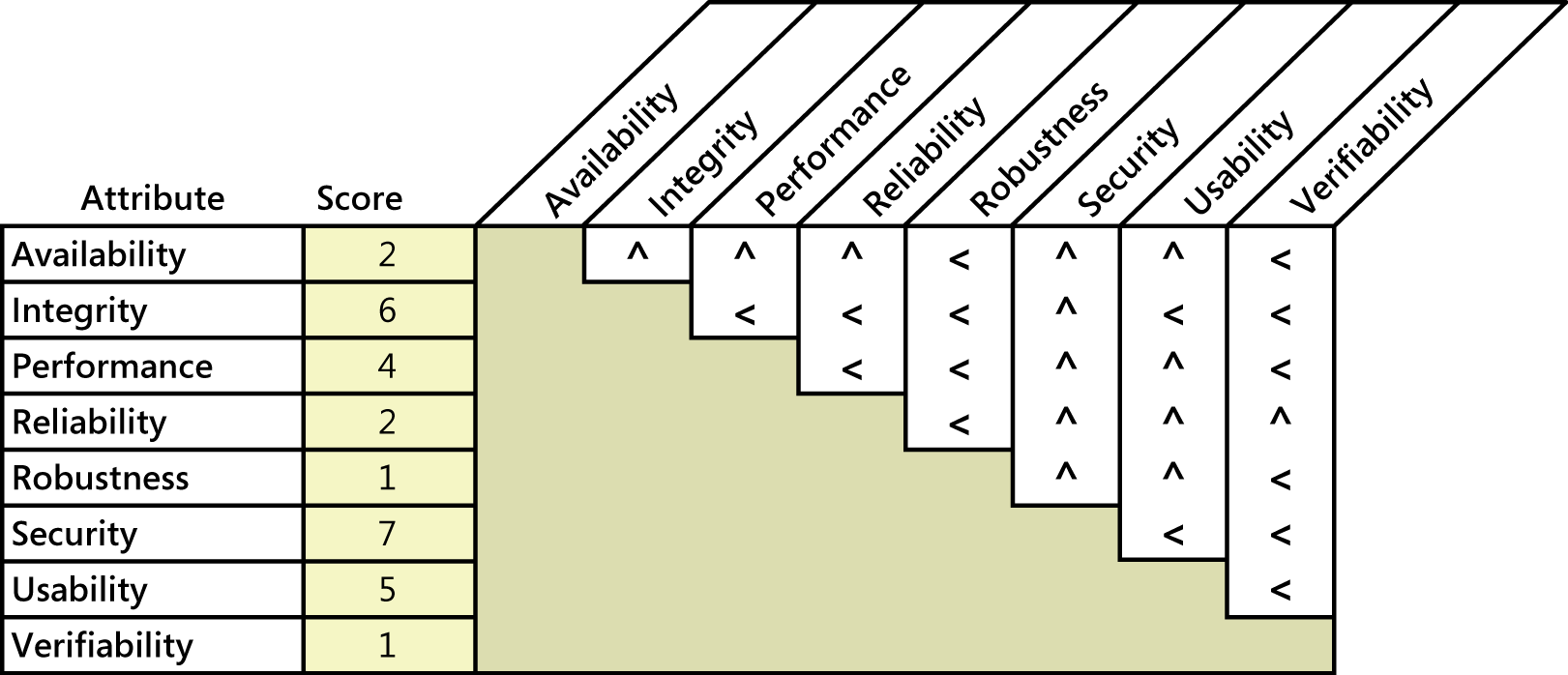

Prioritizing the pertinent attributes sets the focus for future elicitation discussions. Pairwise ranking comparisons can work efficiently with a small list of items like this. Figure 1 illustrates how to use Jim Brosseau’s spreadsheet (at www.clarrus.com/resources/articles/software-quality-attributes) to assess the quality attributes for an airport check-in kiosk. For each cell at the intersection of two attributes, ask yourself, “If I could have only one of these attributes, which would I take?” Entering a less-than sign (<) in the cell indicates that the attribute in the row is more important; a caret symbol (^) points to the attribute at the top of the column as being more important. For instance, comparing availability and integrity, I conclude that integrity is more important. The passenger can always check in with the desk agent if the kiosk isn’t operational, but passengers will be very unhappy if the kiosk doesn’t show the correct data. So I put a caret in the cell at the intersection of availability and integrity, pointing up to integrity as being the more important.

Figure 1. Sample quality attribute prioritization for an airport check-in kiosk.

The spreadsheet calculates a relative score for each attribute, shown in the second column. In this illustration, security is most important (with a score of 7), closely followed by integrity (6) and usability (5). Though the other factors do indeed contribute to success—it’s not good if the kiosk crashes halfway through check-in—the fact is that not all quality attributes can have top priority.
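The tallying behind a score column like this can be sketched in a few lines of Python. This is a minimal sketch that assumes each attribute’s score is simply the number of pairwise comparisons it wins; Brosseau’s spreadsheet may compute its relative score differently. The four attributes and most of the judgments below are hypothetical (only the availability-versus-integrity call comes from the example above), so the resulting scores are not the ones in Figure 1.

```python
from itertools import combinations

def pairwise_scores(attributes, prefer):
    """Score each attribute by how many pairwise comparisons it wins.

    `prefer(a, b)` returns whichever of the two attributes is judged more
    important ("If I could have only one of these, which would I take?").
    """
    scores = {attr: 0 for attr in attributes}
    # Each unordered pair is compared exactly once, like one triangle of the matrix.
    for a, b in combinations(attributes, 2):
        winner = prefer(a, b)
        if winner not in (a, b):
            raise ValueError(f"prefer({a!r}, {b!r}) returned {winner!r}")
        scores[winner] += 1
    return scores

# Hypothetical judgments for a reduced kiosk list; only the first one is from the text.
decisions = {
    frozenset({"availability", "integrity"}): "integrity",
    frozenset({"availability", "security"}): "security",
    frozenset({"availability", "usability"}): "usability",
    frozenset({"integrity", "security"}): "security",
    frozenset({"integrity", "usability"}): "integrity",
    frozenset({"security", "usability"}): "security",
}

attributes = ["availability", "integrity", "security", "usability"]
scores = pairwise_scores(attributes, lambda a, b: decisions[frozenset({a, b})])

# Print attributes from most to least important.
for attr, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{attr:<13}{score}")
```

With n attributes there are n(n-1)/2 comparisons to make, which is why the technique stays practical only for a short list such as the one produced in Step 2.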

Prioritization helps you focus elicitation efforts on the key attributes and helps you know how to respond when you encounter conflicting requirements. In this example, elicitation would reveal a desire to achieve specific performance goals, as well as some specific security goals. These two attributes can clash because adding security layers can slow down transactions. Because the prioritization revealed that security is more important (with a score of 7) than performance (with a score of 4), you should bias the resolution of any such conflicts in favor of security.
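That rule of thumb is simple enough to state as code. The sketch below uses the four scores quoted for the kiosk; the function name and the choice to return None on equal scores are assumptions of this illustration. The score only indicates which way to lean; it doesn’t settle the design trade-off by itself.

```python
# Relative scores quoted in the kiosk example (other attributes omitted).
KIOSK_SCORES = {"security": 7, "integrity": 6, "usability": 5, "performance": 4}

def favored_attribute(a, b, scores):
    """Return the attribute a conflict between a and b should lean toward.

    Returns None when both have the same score, because the prioritization
    then offers no guidance.
    """
    if scores[a] == scores[b]:
        return None
    return a if scores[a] > scores[b] else b

# Adding security layers slows transactions: which way should the resolution lean?
print(favored_attribute("performance", "security", KIOSK_SCORES))  # security
```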

Step 4: Elicit specific expectations for each attribute

Users won’t know how to answer questions such as “What are your interoperability requirements?” or “How reliable does the software have to be?” The business analyst must ask questions that explore the users’ expectations and lead to specific quality requirements that help developers create a delightful product. For instance, following are a few questions a BA might ask to understand user expectations about the performance of an information system that manages patent applications that inventors have submitted:

- What would be a reasonable response time for retrieval of a typical patent application in response to a query?

- What would users consider an unacceptable response time for a typical query?

- How many simultaneous users do you expect on average?

- What’s the maximum number of simultaneous users that you would anticipate?

- What times of the day, week, month, or year have much heavier usage than usual?
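Answers to questions like these can be recorded as explicit thresholds that later measurements are compared against. The following Python sketch is illustrative only: the class, its fields, and every number in it are hypothetical, standing in for whatever the patent system’s users actually say.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class QueryPerformanceExpectation:
    """Elicited answers for one kind of query (hypothetical structure)."""
    reasonable_seconds: float    # "What would be a reasonable response time?"
    unacceptable_seconds: float  # "What would users consider unacceptable?"
    average_users: int           # expected simultaneous users on average
    peak_users: int              # maximum anticipated simultaneous users

    def __post_init__(self):
        # Catch contradictory answers as soon as they are recorded.
        if self.unacceptable_seconds <= self.reasonable_seconds:
            raise ValueError("unacceptable threshold must exceed the reasonable one")
        if self.peak_users < self.average_users:
            raise ValueError("peak load cannot be below average load")

    def classify(self, measured_seconds: float) -> str:
        """Compare one measured response time with the elicited thresholds.

        The user counts are not used here; they would drive load testing.
        """
        if measured_seconds <= self.reasonable_seconds:
            return "meets expectation"
        if measured_seconds < self.unacceptable_seconds:
            return "tolerable"
        return "unacceptable"

# Hypothetical answers for retrieving a typical patent application.
patent_query = QueryPerformanceExpectation(
    reasonable_seconds=2.0, unacceptable_seconds=10.0, average_users=50, peak_users=300
)
print(patent_query.classify(1.4))   # meets expectation
print(patent_query.classify(4.0))   # tolerable
print(patent_query.classify(12.0))  # unacceptable
```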

Consider asking users what would constitute unacceptable performance, security, or reliability. That is, specify system properties that would violate the user’s quality expectations, such as allowing an unauthorized user to modify a file. Defining unacceptable characteristics lets you devise tests that try to force the system to demonstrate those characteristics. If you can’t force them, you’ve probably achieved your quality goals.
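A test aimed at an unacceptable characteristic tries to make the bad outcome happen and passes only if it can’t. The sketch below does this for the unauthorized-modification example. PatentRepository and AccessDenied are hypothetical stand-ins for the real system under test, kept in memory so the example runs on its own.

```python
class AccessDenied(Exception):
    """Raised by the hypothetical repository when a user lacks write permission."""

class PatentRepository:
    """Hypothetical in-memory stand-in for the system under test."""

    def __init__(self, editors):
        self._editors = set(editors)
        self._files = {}

    def write(self, user, file_id, content):
        if user not in self._editors:
            raise AccessDenied(f"{user} may not modify {file_id}")
        self._files[file_id] = content

    def read(self, file_id):
        return self._files.get(file_id)

def test_unauthorized_user_cannot_modify_file():
    repo = PatentRepository(editors={"examiner"})
    repo.write("examiner", "app-001", "original claims")
    # Try to force the unacceptable behavior: an outsider overwriting a file.
    try:
        repo.write("outsider", "app-001", "tampered claims")
    except AccessDenied:
        pass
    else:
        raise AssertionError("unauthorized modification was allowed")
    # The rejected attempt must also leave the stored data unchanged.
    assert repo.read("app-001") == "original claims"

test_unauthorized_user_cannot_modify_file()
print("unauthorized write rejected")
```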

Step 5: Specify well-structured quality requirements

Simplistic quality requirements such as “The system shall be user-friendly” or “The system shall be available 24×7” aren’t useful. The former is far too subjective and vague; the latter is rarely realistic or necessary. Neither is measurable. So the final step is to craft specific and verifiable requirements from the information that was elicited regarding each quality attribute. When writing quality requirements, keep in mind the SMART mnemonic—make them Specific, Measurable, Attainable, Relevant, and Time-sensitive. If a quality requirement isn’t measurable, you’ll never be able to determine if you’ve achieved it. The notation called Planguage (described in Chapter 14 of Software Requirements, 3rd Edition) facilitates precise specification of quality attribute requirements.
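To show what measurability buys you, here is a sketch of a quality requirement recorded with fields named after a few Planguage keywords (Tag, Ambition, Scale, Meter, Goal), plus a check against measured data. It is a hypothetical illustration, not the Planguage notation itself: the requirement text, the 95 percent threshold, and the timings are all invented.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class QualityRequirement:
    """A measurable requirement with fields loosely modeled on Planguage keywords."""
    tag: str              # unique identifier
    ambition: str         # short statement of intent
    scale: str            # unit of measure
    meter: str            # how measurements will be taken
    goal_seconds: float   # threshold on the scale
    goal_fraction: float  # share of measurements that must meet the threshold

    def is_met(self, samples):
        """Return True if enough measured samples fall within the goal."""
        if not samples:
            raise ValueError("no measurements: the requirement cannot be verified")
        within = sum(1 for s in samples if s <= self.goal_seconds)
        return within / len(samples) >= self.goal_fraction

req = QualityRequirement(
    tag="Performance.Query.ResponseTime",
    ambition="Patent queries return quickly enough that users stay focused.",
    scale="Seconds from submitting a query until the first result is displayed",
    meter="Timed runs of 20 typical queries on the production configuration",
    goal_seconds=2.0,
    goal_fraction=0.95,
)

# 20 hypothetical timings; 18 are within 2 seconds (90%), short of the 95% goal.
measured = [1.1, 1.3, 0.9, 1.8, 2.4, 3.1] + [1.2] * 14
print(req.is_met(measured))  # False
```

Because the Scale and Meter are stated up front, a failed check like this one tells the team exactly how far the system is from the goal, rather than leaving “fast enough” open to debate.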

Satisfying the user’s quality expectations is a powerful contributor to software project success. A wise BA will systematically explore quality attributes as diligently as she elicits the system’s functional requirements.

========================================

About the Authors:

Karl Wiegers is Principal Consultant at Process Impact, www.processimpact.com. Joy Beatty is a Vice President at Seilevel, www.seilevel.com. Karl and Joy are co-authors of the recent award-winning book Software Requirements, 3rd Edition (Microsoft Press, 2013), from which this article is adapted.