Fighting for Priority

Any project manager or business analyst who scopes requirements and plans development cycles knows the pain of prioritizing those requirements. Ask five different stakeholders and you will get five different priorities. While this is painful when first planning the project, the pain increases each time there is a change in stakeholders or requirements. Prioritization often ends up being handled as a subjective process, open to the whims of whoever has the responsibility or yells the loudest.

What if there were a way to make prioritization more objective rather than based on opinions?

Cube Priorities

We use cubes in many parts of project management, from risk management to stakeholder management. We can use something similar to help prioritize requirements.

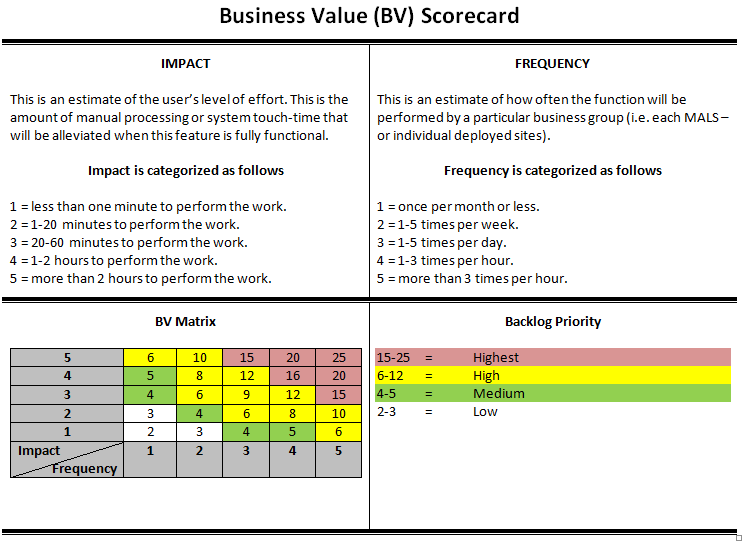

To help in our backlog planning, we created a 5×5 “Business Value Scorecard.”

This card allowed us to calculate scores from 2-25, and those scores could then be grouped as shown to create priorities. In our backlog we tracked both the Impact and Frequency scores and the Business Value (BV) score. Artifacts or requirements can then be ranked by this score.
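As a rough illustration of how such a score could be calculated and grouped, the sketch below assumes the BV score is the product of an Impact rating and a Frequency rating, each from 1 to 5, which is a common way to build a 5×5 grid. The four band names, their cut-offs, and the sample backlog are hypothetical placeholders rather than the values from our card, and the formula on your own card may differ.

```python
# Hypothetical priority bands: (lowest score in band, label), highest band first.
# These cut-offs are illustrative assumptions, not the values from the card.
PRIORITY_BANDS = [(16, "Critical"), (10, "High"), (5, "Medium"), (1, "Low")]


def business_value(impact: int, frequency: int) -> int:
    """Return the BV score, assumed here to be impact x frequency (each 1-5)."""
    for name, value in (("impact", impact), ("frequency", frequency)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    return impact * frequency


def priority(bv_score: int) -> str:
    """Map a BV score to the first band whose lower bound it meets."""
    for lower_bound, label in PRIORITY_BANDS:
        if bv_score >= lower_bound:
            return label
    raise ValueError(f"score {bv_score} is below every band")


# Score and rank a small, made-up backlog, highest BV first.
backlog = [
    {"id": "REQ-1", "impact": 2, "frequency": 5},
    {"id": "REQ-2", "impact": 5, "frequency": 4},
    {"id": "REQ-3", "impact": 1, "frequency": 2},
]
for item in backlog:
    item["bv"] = business_value(item["impact"], item["frequency"])
    item["priority"] = priority(item["bv"])

ranked = sorted(backlog, key=lambda item: item["bv"], reverse=True)
for item in ranked:
    print(item["id"], item["impact"], item["frequency"], item["bv"], item["priority"])
```

Keeping the Impact and Frequency ratings next to the BV score, as in the printed rows, is what lets a disputed ranking be traced back to its two inputs.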

An important thing to note from our experience is that this scorecard should not be used as gospel when prioritizing requirements. We used it for two specific purposes. The first was the initial ranking of our requirements. The second was to give stakeholders who disagreed with a ranking something specific to discuss: the impact and the frequency. Stakeholders would have to argue more objectively on the facts rather than just “I think” or “I’ve heard.” It is possible that impact and frequency scores could change based on facts that were presented during these discussions. If that was the case, the scores could be adjusted to see the effect on the BV score.

Scorecard Adjustments

The card I’ve included here is an example of one of our cards. Using this example as a starting point, you can design it to be more useful to your organization or projects. The important thing is to decide how best to scale or label, and then be consistent for your project. Changing how you score requirements during a project means that when comparing new requirements to old requirements, you might be prioritizing apples and oranges.

Thoughts on Impact

In our BV Scorecard, we used time as a measurement of impact: how much time would be saved if the requirement were implemented? If the requirement added the ability to scan a code rather than manually enter it, then the time savings might only be a minute or so for each entry. If it replaced a manual process for compiling a report from manually entered spreadsheets, then automating that process might save an hour or more each time the report is generated. It is also important to consider that “impact” doesn’t have to be savings in time. It can be an increase in earnings, an increase in savings, or a change in customer activity, such as a decrease in customer use (e.g., help desk) or an increase in customers served (e.g., check-out in a retail store).

Whatever you use as your impact, how you group those scores is important. The statistical mean of whatever you want to measure should normally fall at a score of two or three. The scale depends on the range of impact you want to cover. Ours went out to two hours or more since most of our activities fell into that range. It makes no sense to use a scale of 1, 2, 3, 4, and 5 minutes of time savings when some of your requirements may actually save hours or days. Our example scale runs from 1 minute to 2 hours, but you can modify your scale to better fit the impact range you are working with. Again, the important thing is consistency throughout your project. Don’t change scoring halfway through.
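To make the idea of a time-based impact scale concrete, here is a minimal sketch that converts minutes saved into a 1 to 5 impact score. Only the endpoints (about a minute at the low end, two hours or more at the top) come from our scale; the middle cut-offs and the function name `impact_score` are assumptions chosen for illustration.

```python
from bisect import bisect_right

# Lower bounds, in minutes, for impact scores 2, 3, 4 and 5.
# Only the top bound (120 minutes = 2 hours or more) reflects the article;
# the other cut-offs are hypothetical and should be tuned to your own data.
IMPACT_THRESHOLDS_MIN = [5, 30, 60, 120]


def impact_score(minutes_saved: float) -> int:
    """Return an impact score from 1 (under 5 minutes) to 5 (2 hours or more)."""
    if minutes_saved < 0:
        raise ValueError("minutes_saved cannot be negative")
    # bisect_right counts how many lower bounds the value has reached.
    return bisect_right(IMPACT_THRESHOLDS_MIN, minutes_saved) + 1


for minutes in (1, 45, 150):
    print(minutes, "minutes saved -> impact", impact_score(minutes))
```

Defining the thresholds once, in a single list, is one way to keep the scoring consistent for the whole project.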

Thoughts on Frequency

How often something happens or needs to happen is also important. It makes little sense to make something a high priority if we only perform that task once or twice a year and would only save a couple of minutes after a requirement is implemented. This is especially true if the “cost” of implementing that requirement is significant. On the other hand, if a task is performed many times an hour or day, then saving a minute or two each time adds up to a significant time savings. This would score high on our priority scale.
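The arithmetic behind this is simple enough to sketch. The figures below (20 occurrences a day, 250 working days a year) are assumptions picked only to show the contrast between a rare task and a frequent one.

```python
def annual_minutes_saved(minutes_per_occurrence: float,
                         occurrences_per_year: float) -> float:
    """Total minutes saved per year for one requirement."""
    return minutes_per_occurrence * occurrences_per_year


# Assumed figures, for illustration only.
rare_task = annual_minutes_saved(2, 2)             # 2 minutes saved, twice a year
frequent_task = annual_minutes_saved(1, 20 * 250)  # 1 minute saved, 20 times a day

print(f"Rare task: {rare_task:.0f} minutes per year")
print(f"Frequent task: {frequent_task / 60:.1f} hours per year")
```

Under these assumptions the rare task saves 4 minutes a year, while the frequent one saves roughly 83 hours.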

If we are using something like earned value or savings, then our time scale may be a little different. If our requirement increases the number of customers, then we can consider that number within a context of hours, days, weeks, etc. Increasing the number of customers by 10 per hour means something very different from increasing it by 10 per week.

Thoughts on Priority

While the numbering on the BV Scorecard shouldn’t need to be updated, it is possible to change the color coding based on your priority system. We kept every occurrence of a given number in the same priority group, which makes labeling easier whether it is done manually or automatically. The scores do tend to cluster around the middle, but since we treated the middle as our average or medium point, this is expected. While we used four groups, it is possible to change this to a three- or five-group matrix. Again, simply balance out the numbers across your groups.
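One way to check how balanced a grouping is would be to count how many of the 25 grid cells fall into each group. The sketch below assumes the score in each cell is impact times frequency, and the cut-offs and labels for the four-group and three-group variants are hypothetical, not the ones on our card.

```python
from bisect import bisect_right
from collections import Counter


def cells_per_group(lower_bounds: list[int], labels: list[str]) -> Counter:
    """Count how many of the 25 grid cells land in each priority group.

    lower_bounds holds the lowest score of every group except the first,
    in ascending order, so there is one more label than lower bounds.
    """
    if len(labels) != len(lower_bounds) + 1:
        raise ValueError("need exactly one more label than lower bounds")
    counts = Counter({label: 0 for label in labels})
    for impact in range(1, 6):
        for frequency in range(1, 6):
            score = impact * frequency  # assumed scoring formula
            counts[labels[bisect_right(lower_bounds, score)]] += 1
    return counts


# Hypothetical cut-offs for a four-group and a three-group matrix.
four_groups = cells_per_group([5, 10, 16], ["Low", "Medium", "High", "Critical"])
three_groups = cells_per_group([6, 15], ["Low", "Medium", "High"])

print(dict(four_groups))
print(dict(three_groups))
```

Because the grouping is decided by score alone, every cell with the same number always lands in the same group, and adjusting the cut-offs is all it takes to rebalance.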

Final Thoughts

We refined this scorecard over a couple of release cycles, and it worked well for us. Making sure those entering requirements completed the time and frequency questions allowed our PM/BA team to fill in the information and generate a BV score. They then applied an appropriate initial priority as soon as the requirement was entered into our register. This assignment can easily be automated in something like Excel using formulas and Conditional Formatting. When conducting our scoping meetings, we would first verify the impact/frequency assessments, the score, and the initial priority. If there was disagreement with the assigned priority, we could show how we got there, because the process was transparent and based on information obtained from the customer. This provided a chance to correct the information if needed. Most of the time, disagreement on the final priority was due to corrections that needed to be made to the impact and frequency scores. In cases where the information was correct but there was still disagreement with the assigned priority, we could still manually assign the priority based on the customer’s desire. This was not a common occurrence for us since the assignment was based on something more objective.

I hope that, with some modifications to fit your use case, you will find this useful as a prioritization tool in your projects!